When Code Stops Being Scarce

For most of modern software history, code was the scarce resource. Engineering organizations reflected that constraint: they hired more developers, layered in specialization, added process to manage throughput, and protected the people who could translate ideas into working systems. Velocity was a function of coordinated human effort.

AI changes the constraint. The question is no longer how many engineers you can deploy, but how effectively your organization converts intent into durable, governed outcomes. Judgment, system design, integration discipline, and clarity of purpose now define the bottleneck.

When code stops being scarce, effort no longer determines advantage. Structure does.

Reshaping the Outcome Pod for the AI Era

To me, an outcome pod is 6–10 people aligned to a business result: engineering, product, UX/UI, and sometimes analytics or domain specialists. It is a cross-functional unit accountable for a measurable slice of the business.

That alignment model still holds. What changes is production economics. AI compresses build time, iteration cycles, and handoffs. The same outcome can now be delivered by a focused team of 3–5. This is not about cost cutting. It is about reducing mechanical overhead and tightening the loop between intent and system change.

That compression elevates the Engineering Manager and Pod Lead. They must operate as systems thinkers—defining architectural constraints, enforcing interface contracts, and designing guardrails within which AI operates. AI can generate artifacts quickly. Humans remain accountable for coherence, safety, compliance, and long-term maintainability.

Cross-domain complexity does not disappear. It intensifies. Engineering Managers must continue coordinating across domains to ensure AI-driven changes in one area do not introduce instability in another.

There is a temptation to staff pods only with senior leads and assume they can “run everything with AI.” That creates fragility. Sustainable organizations invest in mid-level and junior engineers to protect resilience and build a growth pipeline. Companies scale by splitting pods. If every pod is top-heavy, there is no internal bench to seed expansion, no shared engineering DNA, and no distributed ownership capable of questioning AI-generated assumptions or detecting system drift.

Talent, Incentives, and Rituals Must Shift Together

Operating rituals are the first place the shift becomes visible. The traditional standup—what you did yesterday, what you’ll do today, what is blocking—assumes that human effort is the pacing function. In an AI-enabled workflow, risk rarely appears as a visible blocker. It surfaces as architectural deviation, hidden assumptions, or unintended system side effects.

The daily scrum becomes less a status ritual and more an alignment and inspection loop. It begins with intent: what changed in the business, what new demands emerged, and which of them are cross-domain. It surfaces integration implications early. It explicitly inspects AI behavior: what was generated, how it maps to architectural patterns, whether it respects domain boundaries, and whether constraints or prompts require refinement.

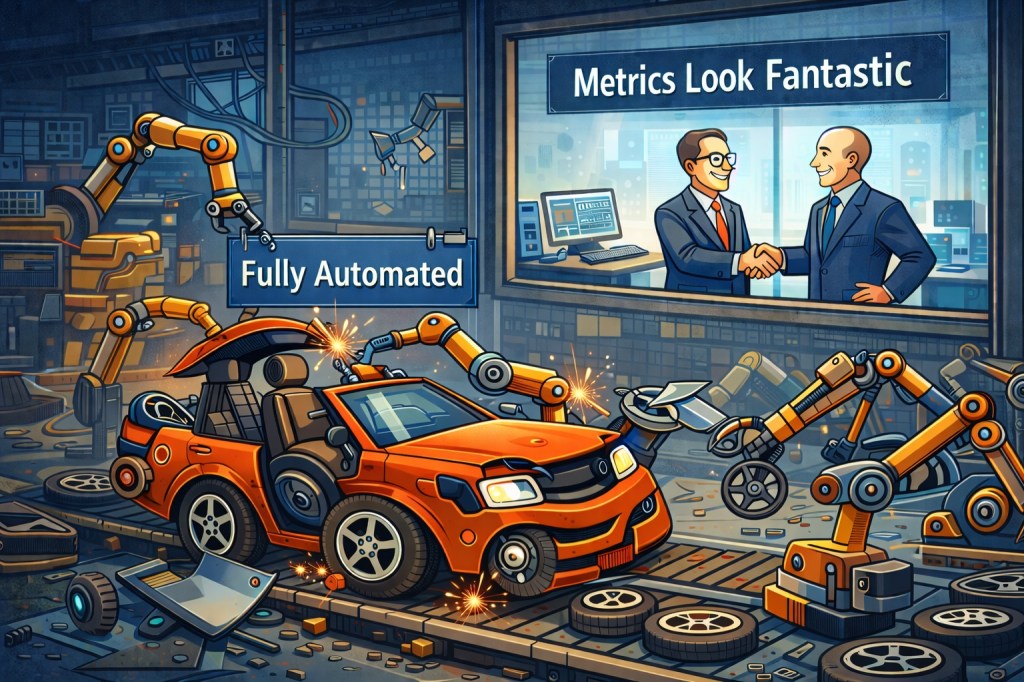

Metrics must evolve as well. DORA+ metrics no longer provide the signals they once did. AI can improve throughput and deployment frequency mechanically while quietly increasing structural complexity and systemic risk.

When generation becomes cheap, code risks becoming disposable—produced quickly, accepted quickly, and replaced just as quickly without architectural intention. Disposable code erodes clarity, increases coupling, and burdens future change. Architecture prevents acceleration from devolving into churn.

Organizations must elevate architectural review and intentional design, enforcing alignment with domain models, layering principles, and long-term system evolution.

Incentives must reinforce that discipline. If teams are rewarded for speed alone, AI will amplify short-term gains and long-term instability. If they are rewarded for durable systems, clear ownership, and predictable outcomes, AI becomes a force multiplier for disciplined engineering.

The AI Ecosystem Is the New Infrastructure Layer

In the past, application infrastructure determined delivery reliability. CI/CD, observability, testing frameworks, and cloud primitives formed the backbone of execution. They governed how software moved from idea to production and how risk was contained once it got there.

AI now requires its own structured ecosystem. Workflows must orchestrate multiple agents, and those agents must interact cleanly with domain services and their underlying data stores. Interfaces need to be shaped so models can reason predictably rather than wander probabilistically. Logging, traceability, validation layers, and approval gates must be embedded from the start so generated changes can be understood, audited, and refined over time. Without that structure, AI introduces variability where reliability used to live.
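To make the idea of embedded logging, validation, and approval gates concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption rather than a prescribed design: the `GeneratedChange` fields, the `record` and `review` helpers, and the rule that a change crossing a domain boundary needs a human decision are all hypothetical names and policies chosen for the example.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Callable, List


@dataclass
class GeneratedChange:
    """A change proposed by an AI agent (hypothetical shape)."""
    agent: str
    target: str             # service or module the change applies to
    summary: str            # human-readable description of the change
    touches_boundary: bool  # True if the change crosses a domain boundary


# In-memory audit trail; a real system would use durable storage.
audit_log: List[str] = []


def record(event: str, change: GeneratedChange, detail: str = "") -> None:
    """Append a timestamped, structured entry to the audit trail."""
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "detail": detail,
        "change": asdict(change),
    }
    audit_log.append(json.dumps(entry))


def review(change: GeneratedChange,
           approve: Callable[[GeneratedChange], bool]) -> str:
    """Log the proposal, validate it, and gate risky changes on a human."""
    record("proposed", change)

    # Validation layer: reject changes that cannot be understood later.
    if not change.summary.strip():
        record("rejected", change, "missing summary")
        return "rejected"

    # Approval gate: cross-domain changes need a deliberate human decision.
    if change.touches_boundary:
        outcome = "approved" if approve(change) else "rejected"
        record(outcome, change, "human gate: crosses domain boundary")
        return outcome

    record("accepted", change, "passed validation")
    return "accepted"
```

In this sketch the `approve` callback stands in for whatever human review step an organization actually uses. Every path through `review` writes to the audit trail, so both the proposal and its outcome can be reconstructed afterward.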

Security becomes central within this layer. There is a natural impulse to give AI broad access—full repository visibility, production data, unrestricted API permissions—because more context often improves output quality. That instinct, while understandable, is strategically unsound. AI systems must operate under explicit least-privilege principles, with carefully scoped access to data, write permissions, deployment rights, financial systems, and customer records. Not every agent should see sensitive data, and not every workflow should have the authority to transact or deploy without deliberate human approval.
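One way to picture least privilege for agents is a deny-by-default policy check, sketched below in Python. The agent names, the `(action, resource)` scope strings, and the choice of `deploy` and `transact` as actions that always require human approval are assumptions made for illustration, not a reference to any particular framework.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, Tuple

# Hypothetical set of actions that always need deliberate human approval.
REQUIRES_HUMAN_APPROVAL = {"deploy", "transact"}


@dataclass(frozen=True)
class AgentPolicy:
    """Scopes granted to one agent; anything not listed is denied."""
    name: str
    allowed: FrozenSet[Tuple[str, str]] = field(default_factory=frozenset)


def authorize(policy: AgentPolicy, action: str, resource: str,
              human_approved: bool = False) -> bool:
    """Allow only explicitly granted (action, resource) pairs, and require
    human approval on top of the grant for sensitive actions."""
    if (action, resource) not in policy.allowed:
        return False  # deny by default
    if action in REQUIRES_HUMAN_APPROVAL and not human_approved:
        return False  # a grant alone is not enough for sensitive actions
    return True


# Example policies (hypothetical agents and resources).
docs_agent = AgentPolicy(
    name="docs-agent",
    allowed=frozenset({("read", "repo:docs"), ("write", "repo:docs")}),
)
release_agent = AgentPolicy(
    name="release-agent",
    allowed=frozenset({("read", "repo:service"), ("deploy", "env:staging")}),
)
```

Under these assumed policies, `docs_agent` can read and write documentation but cannot touch customer data, and `release_agent` can deploy to staging only when `human_approved=True` is passed. The design choice worth noting is that access is an explicit allowlist per agent, so broadening an agent's reach is a visible, reviewable change rather than a default.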

These boundaries are not implementation details. They are risk-management decisions. At the executive level, the question is not whether AI increases speed; it is whether the organization has designed a system in which increased speed does not translate into increased systemic risk. Companies that treat AI security and governance as foundational architecture rather than an afterthought will be the ones that scale safely.

Where You Place Your Bets Determines Your Leverage

AI does not reward the largest organizations; it rewards the most deliberate ones. When code becomes abundant, teams can generate authentication flows, dashboards, internal tools, and entire service layers faster than ever. The danger is not speed itself. It is the quiet accumulation of disposable systems that feel efficient in the moment but create long-term drag—systems that must be maintained, secured, evolved, and eventually untangled. Without strategic clarity, AI amplifies undifferentiated output and accelerates architectural sprawl.

The executive question therefore shifts from “How do we move faster?” to “Where does speed matter?” Effort should concentrate on proprietary domain logic, differentiated workflows, unique data models, and system behaviors that define competitive advantage. Speed applied to undifferentiated capability is noise. Speed applied to what makes you distinct is leverage.

In an environment where generation is cheap, judgment becomes the scarce asset. Leverage emerges from how intentionally that judgment is allocated—what the company chooses to build, what it refuses to build, and how rigorously it protects the systems that matter most. AI expands capability, but only deliberate structure converts expanded capability into sustained advantage.