Part one of a three-part series on redesigning the software delivery lifecycle for AI-augmented engineering.

The right team size is not a fixed number. It is a function of what a single engineer can produce and what it costs to coordinate the rest.

For most of the last two decades, the golden pod size was four to eight engineers. That range was not arbitrary. It was the sweet spot for both throughput and return on investment. Below four, you were leaving capacity on the table. Above eight, every additional engineer cost more in coordination than they added in output. Handoffs multiplied. Alignment meetings grew. Shared context had to be rebuilt every time someone joined or rotated out. The curve was real, and most organizations found it out the hard way.

AI has changed the individual side of that equation. One engineer working alongside capable tooling produces meaningfully more than one engineer working alone did three years ago. The deterministic scaffolding, the routine translation between layers, the boilerplate that used to consume hours now collapses into minutes. Per-engineer throughput is up, which means fewer engineers are needed to produce the same output, and fewer engineers means less coordination overhead on the other side of the equation. The sweet spot has moved. What used to take four to eight engineers now takes two or three.
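One way to make the "function" framing concrete is a toy model. The sketch below assumes that coordination cost grows with the number of engineer pairs, n(n-1)/2, and asks for the smallest team whose net output meets a fixed target. The function names, the pairwise-cost assumption, and every number are illustrative, not measurements.

```python
from typing import Optional


def net_output(n: int, per_engineer: float, pair_cost: float) -> float:
    """Gross output of n engineers minus a coordination cost charged per pair.

    Assumption: every pair of engineers costs the same to keep aligned.
    """
    pairs = n * (n - 1) // 2
    return n * per_engineer - pair_cost * pairs


def smallest_team(target: float, per_engineer: float, pair_cost: float,
                  max_n: int = 20) -> Optional[int]:
    """Smallest headcount whose net output meets the target, or None if none does."""
    for n in range(1, max_n + 1):
        if net_output(n, per_engineer, pair_cost) >= target:
            return n
    return None


# Hypothetical units: same target and pairwise cost, per-engineer output doubled.
before = smallest_team(target=30, per_engineer=10, pair_cost=1.5)
after = smallest_team(target=30, per_engineer=20, pair_cost=1.5)
print(before, after)  # prints "4 2"
```

With these made-up units, the smallest viable team drops from four to two when per-engineer output doubles, and in the slower case the eighth engineer slightly lowers net output. The direction of the effect is the point; the exact figures depend entirely on the assumed inputs.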

But the activities AI accelerates are not the activities that require judgment. Oversight, architectural reasoning, contextual interpretation, and the decisions about what to build and what to leave out have not compressed. If anything, they matter more, because compressed delivery means compressed time to catch what AI gets wrong.

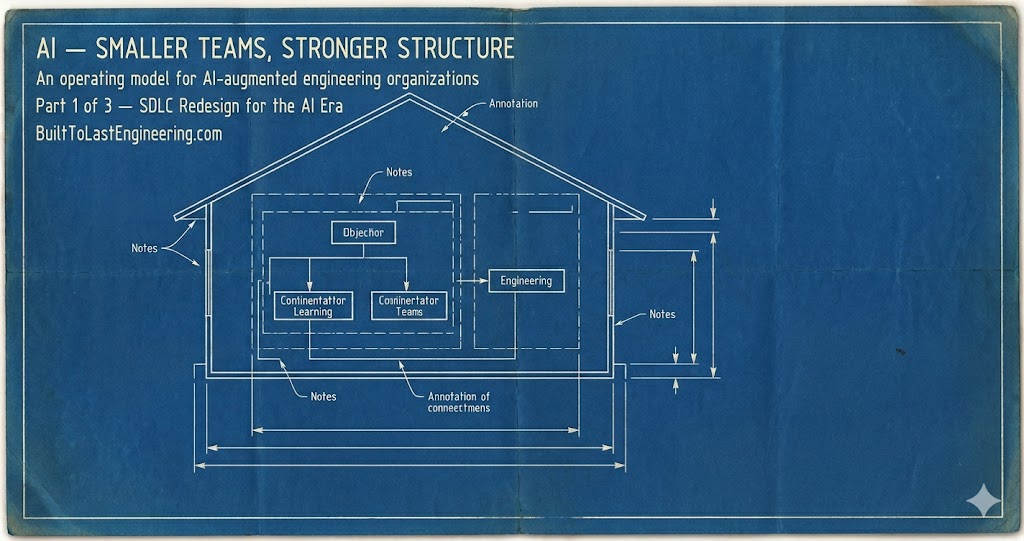

This is the operating model the moment is asking for: smaller delivery pods, tiered ownership through parent domains, and technical guilds as horizontal centers of excellence. Not because small is better than large. Because the work has changed shape, and the structure has to change with it.

Software engineering is not deterministic, and AI does not change that

Before going further, it is worth being clear about what AI is and is not doing.

Software engineering is situational. It is shaped by the organization that produces it, the constraints of the business it serves, the history of the codebase, the maturity of the teams, and the trade-offs the company has already made and forgotten about. Two engineers given the same requirement will produce different systems, and both can be right depending on context. If software engineering were deterministic, we would already have automated most of it. We have not, because it is not.

AI has not changed this. Self-driving cars, the most heavily funded application of AI in the physical world, still drive through active crime scenes, stop in the middle of intersections and refuse to move, get confused by puddles, and block traffic for hours waiting for a human to intervene. These are not edge cases discovered by adversarial testing. They are routine failures on city streets in 2026, with billions of dollars and a decade of training data behind the systems. The problem is not a lack of model capability. The problem is that real environments are non-deterministic, and non-deterministic environments require judgment, not pattern matching.

Software is the same kind of environment. The code itself may be deterministic, but the decisions about what to build, how to bound it, what to leave out, what to integrate with, and what to deprecate are not. AI is excellent at producing plausible output inside a well-defined frame. It is not excellent at deciding what the frame should be. That decision still belongs to humans, and the operating model has to be designed around that fact.

The implication is straightforward. AI accelerates the deterministic parts of delivery. It does not accelerate the judgment parts. A team designed for the new economics is small where delivery happens and strong where judgment happens. That is the model.

The pod: two or three engineers owning a bounded context

The atomic unit is the pod. Two or three engineers, owning a single bounded context end-to-end. Not a feature team. Not a squad with shifting scope. A pod with a domain, a set of services or modules that express that domain, and the operational responsibility for what it produces.

The pod works because AI has raised what a single engineer can produce, which means the pod needs fewer people to do what a larger team used to do, which means less of the pod’s time gets spent coordinating with itself. One engineer can hold the design conversation, one can move through implementation with AI handling the deterministic scaffolding, and one can carry the review and operational load. Roles rotate. The pod does not need a project manager, a dedicated tester, or a separate operations contact, because the activities those roles existed to coordinate have either been absorbed into the pod itself or compressed into work the pod can handle directly.

The pod does not scale by adding engineers. This is the part that surprises people who assume AI just makes existing team math better. It does not. AI has already changed the scope of what each engineer can ship in one iteration. What used to be a single story is now several. What used to take a small sub-team across a sprint can now move through two or three engineers in the same window. The unit of change per cycle has gotten bigger, not smaller.

Adding more engineers to the same pod means stacking those broader scopes on top of each other in the same domain in the same cycle. Domain ownership means every engineer is already touching the same code, so adding people does not parallelize the work. It puts more hands on the same modules and recreates the coordination overhead the pod was designed to eliminate. And once multiple engineers are shipping concurrent broad-scope changes into the same domain in the same cycle, attribution breaks down. When a metric moves, you cannot cleanly say which change caused it. The signal degrades exactly when you need it most.

The pod is small not because small is virtuous, but because the work it owns has a natural ceiling on how much change can move through it coherently in one cycle, and AI has already raised what each engineer brings to that ceiling.

If you consistently need more throughput, you do not grow the pod. You draw new domain boundaries and stand up another pod. This is why the pod-of-pods structure matters. It is not an organizational nicety layered on top of the delivery model. It is the throughput-scaling mechanism. Capacity grows by carving the domain more finely, not by inflating pod size. And the same structure that enables that carving is the structure that produces the leadership bench, because every new pod creates a new senior seat and a new second-in-command behind it. Throughput and leadership development are produced by the same act of drawing the boundary. The pod-of-pods is the backbone of both.
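The difference between growing a pod and splitting it can be sketched by counting communication paths. The example below assumes every pair of engineers inside a pod needs a path, and that each pod adds one link to its parent domain once there is more than one pod. Both assumptions and the helper names are hypothetical simplifications.

```python
from math import comb
from typing import List


def internal_paths(pod_sizes: List[int]) -> int:
    """Pairwise communication paths inside each pod, summed across pods."""
    return sum(comb(size, 2) for size in pod_sizes)


def total_paths(pod_sizes: List[int]) -> int:
    """Internal paths plus one link per pod to the parent domain.

    Assumption: a lone pod has no parent link; cross-pod coordination
    goes through the parent domain rather than engineer to engineer.
    """
    parent_links = len(pod_sizes) if len(pod_sizes) > 1 else 0
    return internal_paths(pod_sizes) + parent_links


one_pod_of_six = total_paths([6])       # 15 internal paths
two_pods_of_three = total_paths([3, 3])  # 3 + 3 internal, plus 2 parent links
print(one_pod_of_six, two_pods_of_three)  # prints "15 8"
```

Under these assumptions the same six engineers carry roughly half the coordination paths when split across two bounded contexts, which is the arithmetic behind scaling by boundary rather than by headcount.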

The pod is also the first rung of the leadership bench. With two or three engineers, the senior and second-in-command dynamic is not theoretical. The senior is already preparing the second to take their seat, because the pod is small enough that succession matters operationally. Rotation, vacation, and growth all create the moment where the second has to lead, and the senior has to have prepared them for it. This is not a side effect of pod size. It is one of the reasons the structure works. The mentorship is built into the geometry.

Two failure modes are worth naming up front, because both are predictable and both are addressed by the rest of the structure. The first is fragmentation: small pods drift, accumulate local decisions that conflict with each other, and produce a system that looks coherent at the pod level and incoherent at the system level. The second is isolation: a two-person pod loses one engineer to illness or attrition and becomes a one-person team with a bus factor of one. The parent domain and the guild are the structural answers to both.

The parent domain: where senior judgment lives

At scale, pods do not live in isolation. They roll up into a parent domain that represents a coherent business problem space. Payments is a pod. Ledger is a pod. Reconciliation, billing, and disbursements are pods. They are not independent products. They are facets of a Finance domain, and the Finance domain has its own leadership and its own architecture.

The parent domain leadership is where directors, principal engineers, solutions architects, and distinguished engineers live. Their job is not to manage the pods day-to-day. Their job is to own the shape of the domain: how the bounded contexts fit together, where the seams are, which integrations are strategic and which are tactical, and what the domain looks like a year from now. They make the decisions that no individual pod has the altitude to make, and they make them with the technical depth that the work requires.

This role evolves with the domain. Early on, the parent domain is often a single pod. There is no separation yet between the domain and the team because there is nothing to separate. The leader is a hands-on engineer running the whole domain. As throughput needs grow and new sub-domains get carved out, the original pod becomes the parent and the leader’s role starts to shift. At two or three pods, the leadership is still hands-on. It looks and behaves like a delivery team. The leader is still reviewing code, still in the implementation conversations, still close enough to the work to be a contributor. As the domain matures and more pods spin up underneath it, that role transitions further. The leader becomes a solutions architect, a technical strategy function, the person who owns the domain-level decisions but no longer ships code directly. Sometimes that is the same person growing into a larger role. Sometimes the role splits, with a director owning people and a principal engineer owning architecture. Both work. What matters is that the senior depth is real, and it is positioned where it can shape the domain rather than getting absorbed into individual pod execution.

The structure itself emerges the same way. As the work grows, sub-domain pods get carved out of the original. The same thing can happen again at the next layer: a sub-domain pod that has grown enough to need its own sub-divisions becomes a parent in its own right. The pod-of-pods structure is recursive, and the depth of the tree is determined by the complexity of the work, not by an organizational template imposed from the top. What matters is that the boundary-drawing is deliberate. New pods are not stood up because someone needs a team. They are stood up because a bounded context has emerged that deserves its own ownership.

Sometimes the boundaries also need to be redrawn. This is the part most operating models refuse to acknowledge. What seemed like one domain at the start turns out to be three. What looked like three independent domains share so much underlying logic that they should be consolidated. Boundaries are hypotheses about how the work decomposes, and hypotheses sometimes need to be revised in the face of evidence.

The right way to think about this is tree balancing. The pod-of-pods structure is a tree, and trees perform poorly when they get lopsided. When one parent domain accumulates too many sub-pods while its siblings stay shallow, coordination concentrates in the wrong place. The leader of the heavy branch becomes a bottleneck. The leaders of the light branches lose context because everything important is happening somewhere else. Throughput degrades not because anyone is doing anything wrong, but because the shape of the tree no longer fits the distribution of work.

Rebalancing means splitting heavy branches when a sub-domain has grown enough to deserve its own parent, and consolidating light branches when separate ownership is no longer justified. Both are balancing operations. Both require the same discipline: looking at the structure honestly and being willing to restructure when the shape no longer serves the work. Paper leaders defend the org chart they inherited. Real leaders are willing to redraw it when the work demands it.
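The recursive structure and the balancing check can be sketched as a small tree. This is a minimal illustration, not a prescribed tool: the `Domain` class, the `rebalance_candidates` helper, the `max_direct` threshold, and the Identity and Login nodes are all hypothetical, and a real review would weigh far more than child counts.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Domain:
    """A node in the pod-of-pods tree; a node with no children is a single pod."""
    name: str
    children: List["Domain"] = field(default_factory=list)

    def pod_count(self) -> int:
        """Number of leaf pods at or under this node."""
        if not self.children:
            return 1
        return sum(child.pod_count() for child in self.children)

    def depth(self) -> int:
        """Layers at or below this node; a lone pod has depth 1."""
        if not self.children:
            return 1
        return 1 + max(child.depth() for child in self.children)


def rebalance_candidates(root: Domain, max_direct: int = 4) -> Dict[str, str]:
    """Flag parents with too many direct sub-pods or with only one.

    Hypothetical heuristic: more than max_direct children suggests a split,
    exactly one child suggests consolidation. Thresholds are assumptions.
    """
    flags: Dict[str, str] = {}
    stack = [root]
    while stack:
        node = stack.pop()
        if len(node.children) > max_direct:
            flags[node.name] = "split"
        elif len(node.children) == 1:
            flags[node.name] = "consolidate"
        stack.extend(node.children)
    return flags


# Finance and its pods come from the example above; Identity and Login are made up.
finance = Domain("Finance", [Domain(name) for name in
                             ["Payments", "Ledger", "Reconciliation",
                              "Billing", "Disbursements"]])
identity = Domain("Identity", [Domain("Login")])
org = Domain("Engineering", [finance, identity])

flags = rebalance_candidates(org)
print(org.pod_count(), org.depth(), flags)
```

Here the heavy Finance branch is flagged as a candidate for splitting and the single-pod Identity branch as a candidate for consolidation. The flags are prompts for a boundary conversation, not decisions.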

The parent domain is also the single point of coordination with the rest of the organization. Product, finance, compliance, security, and every other function that needs something from engineering talks to the parent domain leader, not to individual pods. The leader translates the request into what it actually means for the architecture, negotiates scope and trade-offs at the right altitude, and then translates the decision down to the pods that need to act on it. This is one of the most operationally valuable functions of the parent domain, and it is the easiest one to overlook. Without it, pods get pulled into stakeholder meetings that are not theirs to attend, senior engineers lose hours to context-switching across unrelated asks, and the work fragments. The parent domain absorbs the coordination load deliberately so the pods can stay in the domain. It is also why the parent domain leader has to be technically credible rather than a routing layer. Translating business intent into technical direction is a judgment activity, not a forwarding activity.

The bench compounds through the layers. A senior who has grown into pod leadership becomes the second-in-command at the parent domain. The parent domain leader prepares that senior for parent-domain leadership the same way the senior was once prepared for pod leadership. Same mechanism, different altitude. This is how the pod-of-pods design produces leaders rather than just delivering software: every layer is both a delivery unit and a mentorship environment, and the second-in-command relationship is the through-line that makes succession real at every level.

This is the part of the model that the “flat org with AI” crowd cannot answer. They have a story about delivery and no story about where senior engineers go, what they do, or how the bench gets built. The pod-of-pods structure is the answer. It is where senior careers actually live in an AI-augmented organization, and it is what prevents the pod model from collapsing into a network of clever individuals with no architectural coherence.

Guilds: centers of excellence, not committees

The third layer is the guild. Guilds are horizontal. They cut across pods and parent domains, and they exist to maintain practice consistency in a specific technical area: backend, frontend, data engineering, mobile, platform, security. Without guilds, twelve pods will solve the same backend problem twelve different ways within a year, and the organization will spend the next three years paying for the divergence.

The important word is excellence, not committee. Guilds are not gatherings where representatives meet weekly to debate standards and produce nothing. They are centers of excellence that own the practice itself. They publish the patterns. They maintain the reference implementations. They write the guides for how backend services get built at this company, what tooling is supported, what trade-offs have been made and why. Architects engage through the guild as a continuous practice, not as a gate at PR time or as a postmortem participant after something has gone wrong. The guild is where architectural intent gets encoded before code gets written, not where it gets enforced after.

This is the inversion that matters. In most organizations, architects are reactive. They show up when something is broken, when a design is being escalated, or when someone needs a sign-off. That model wastes their experience and frustrates the engineers who only encounter them under adversarial conditions. The guild model puts architects in the production loop of standards and patterns, where their experience compounds rather than gets spent on triage.

Guilds are also where experimentation lives. Engineers do not stop wanting to try new things, and organizations can afford neither to freeze the stack nor to let every pod adopt whatever it finds interesting. The guild is the structural answer to both failure modes. It is the place where new technology gets evaluated honestly, with the technical depth to know what is real and the cross-pod visibility to know whether it solves a problem the organization actually has. The guild decides what is worth piloting, runs the pilot, learns from it, and makes the call on when something graduates from experiment to standard, and when it does not. This is how technology adoption stays intentional instead of drifting. The guild owns the question of when to invest in a rollout, what it replaces, and what the migration path looks like. Pods do not make that call alone. Parent domains do not make it for the whole organization. The guild does, because the guild is the only structure that holds the technical depth, the practice ownership, and the cross-cutting view at once.

How the three layers hold each other up

The three layers are not independent. They are mutually reinforcing, and the model only works when all three are present.

Pods deliver, but they cannot see across themselves. The parent domain provides the cross-pod view and the senior judgment that no individual pod can produce. The parent domain owns the shape of the problem space, but it cannot enforce consistency of practice or technology direction across unrelated domains. The guild does that. The guild sets technical direction and governs experimentation, but it does not own delivery and cannot make domain-shape decisions. The pod and the parent domain do that.

Each layer prevents a specific failure. Without pods, you have coordination overhead that AI has made unnecessary. Without parent domains, you have fragmentation and a missing destination for senior careers. Without guilds, you have practice divergence and unintentional technology drift, both of which scale into long-term cost.

The structure is also how the leadership bench gets built. The bench is not produced at one layer and then promoted to another. It runs through every layer at once. Inside the pod, the senior prepares the second-in-command. Inside the parent domain, the leader prepares the senior who is becoming the second-in-command at that altitude. The pod-of-pods design is what makes this continuous. There is always a next seat, and there is always someone being deliberately prepared for it. Paired review, guild participation, and parent-domain mentorship are the mechanisms. The structure is what makes them compound. An organization built this way produces its own senior talent rather than requiring it to be hired in. The pipeline has a destination at every layer, and the destination is real work, not a title.

What this series will cover

This piece has made the structural argument. The next two pieces will make it operational.

Part two will walk through inception-to-delivery in the new model: where AI accelerates, where human judgment is non-negotiable, how plan review replaces standup as the central ritual, why vibe coding belongs at inception and nowhere else, and where the accountability boundary sits when AI is generating code. It will also address the autonomy-first consulting frameworks that are being sold as the answer to AI-era delivery, and why they are paper leadership in a new package.

Part three will cover measurement. The pod model breaks most of the metrics that engineering organizations have leaned on for the last decade. Sprint velocity, story point precision, and individual output measures were already weak signals. In an AI-augmented pod, they are actively misleading. The third piece will lay out what to measure instead: token cost per outcome, estimation accuracy as a diagnostic, the role of long-term velocity trends paired with outcome hit rate, and the principle that no single metric tells the story. The pattern across metrics does. A divergent metric is a question, not a verdict.

The thesis across all three pieces is the same. Software engineering is non-deterministic, AI has shifted what humans need to do but not whether they need to do it, and the organizations that thrive in the next five years will be the ones that redesign their operating model around that reality rather than treating AI as a productivity overlay on a structure that no longer fits the work.